TryHackMe SAL1 - Passed First Attempt, Here's What Actually Matters

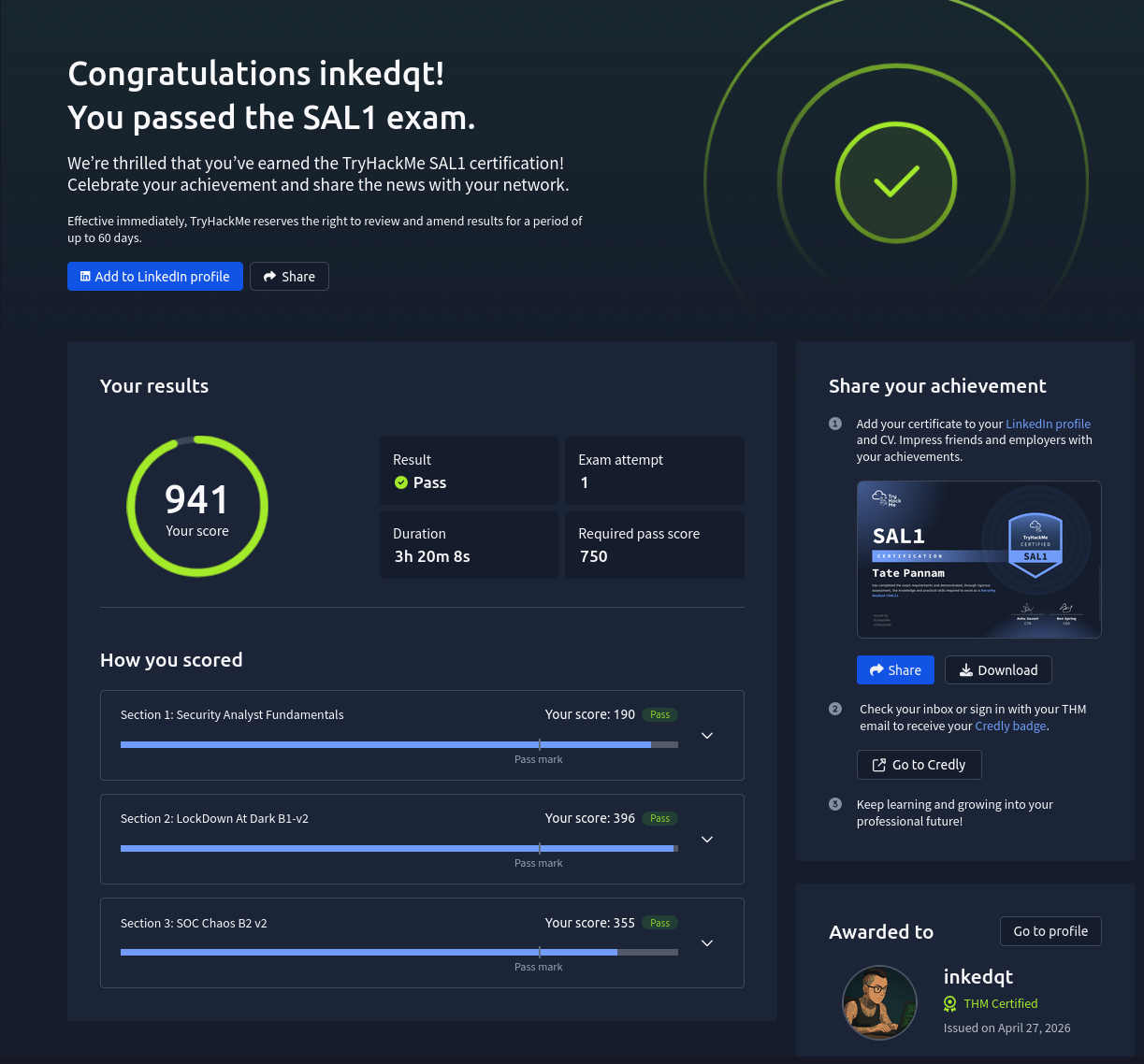

SAL1 first attempt review: 941 points against a 750 pass mark, zero misclassifications, and some honest thoughts on where the exam shines and where it falls short.

SAL1 is TryHackMe’s entry-level blue team certification. It’s aimed squarely at people starting their SOC journey — alert triage, incident classification, escalation decisions, case reporting. No memory forensics, no deep DFIR, no rabbit holes. You’re sitting in a simulated SOC, alerts are coming in, and your job is to work through them like an analyst on shift.

I passed on my first attempt with 941 points against a pass mark of 750. Here’s an honest breakdown of the experience.

The Learning Path Problem

I’ll be upfront — SAL1 sat at 69% complete for months before I ever touched the exam. The learning path follows the HackTheBox model: read a few pages of content, do a simple hands-on exercise to reinforce it, repeat. It’s better than a wall of text, but as soon as I found CyberDefenders and BTLO — platforms that drop you into a lab with minimal guidance and make you figure it out — I struggled to go back to a structured pathway.

That’s not a knock on the format. Plenty of people learn well that way. It’s just not me. I need to be discovering things, not following a set path. The material sat unfinished until I won a 50% discount during the SOC-mas Blue Team event, which is honestly the only reason I pulled the trigger on the exam voucher. At full price, given my learning style, I’m not sure I would have.

If you’re the same kind of learner — hands-on, lab-first, gets bored following structured content — know that going in. The exam is very doable without completing the pathway if you’ve put the hours in elsewhere.

Going In With a Template

Before launching the first lab I’d done TryHackMe’s free Phishing Unleashed SOC sim and learned pretty quickly that my normal investigation notes weren’t going to cut it for AI grading. The exam reports are scored by an AI against a rubric — classification, escalation decision, case report quality — and it rewards structure.

I went in with a prepared reporting template built around the 5Ws and the SAL1 alert reporting criteria:

- Clear TP/FP classification with reasoning

- Escalation justification or explanation of why it’s not required

- Affected entities — who, what, where, when

- Full IOC list — network indicators, host indicators, hashes

- Recommended remediation actions

- Optional MITRE ATT&CK mapping

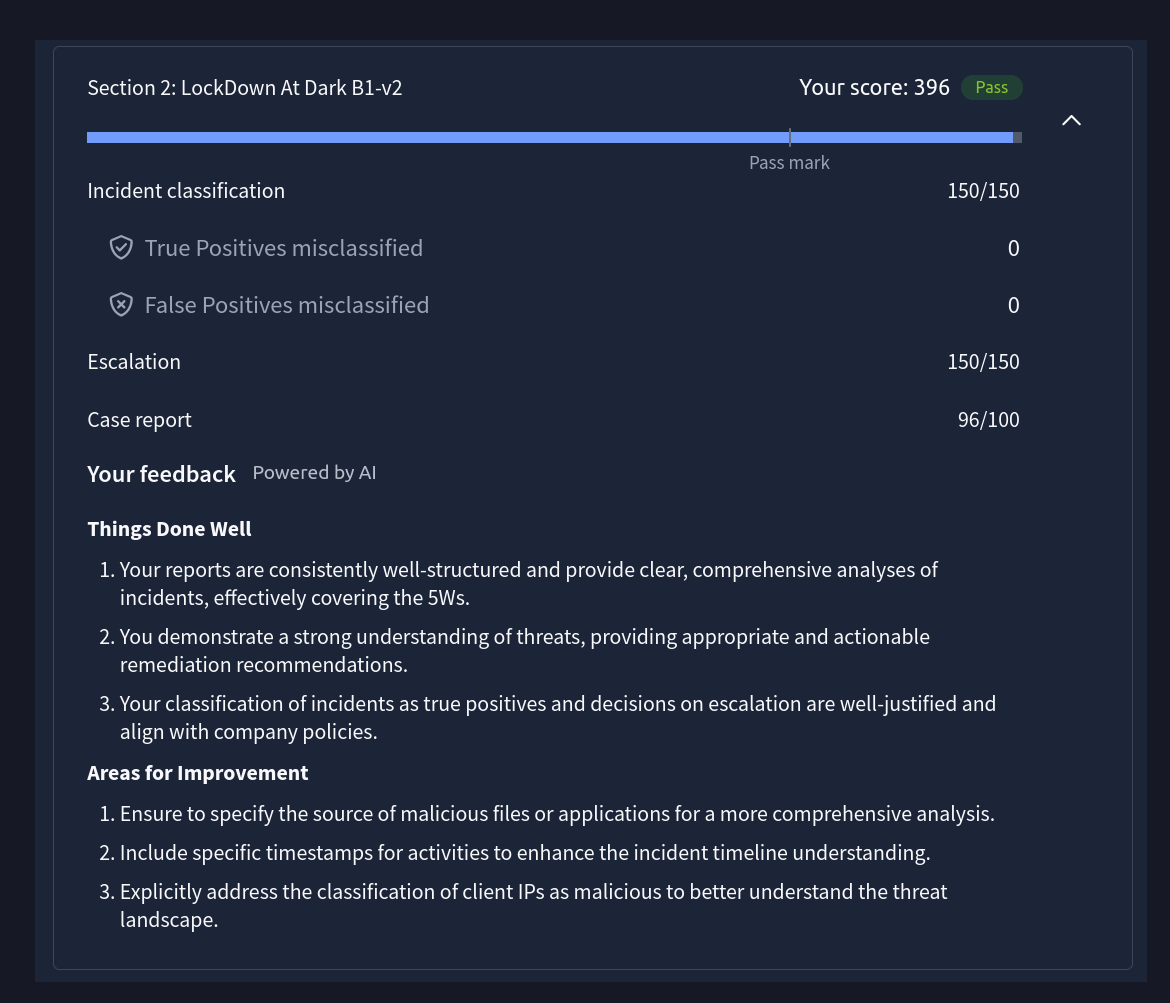

Having that template ready before the clock started meant I was never staring at a blank text box figuring out what to write. I just filled in the structure. Every single report across both labs came back at 96-100/100. The template did its job.
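
For anyone who would rather keep the template as something fillable than as a text snippet, here is a minimal Python sketch of the sections listed above. The `CaseReport` class, its field names, and every sample value are hypothetical. This is not TryHackMe’s rubric or the exact template in the screenshot, just one way to make sure no section gets skipped under time pressure.

```python
from dataclasses import dataclass, field


@dataclass
class CaseReport:
    """Hypothetical container mirroring the template sections listed above."""
    alert_id: str
    classification: str                 # "True Positive" or "False Positive"
    reasoning: str
    escalate: bool
    escalation_reason: str
    who: str
    what: str
    where: str
    when: str
    iocs: dict[str, list[str]] = field(default_factory=dict)
    remediation: list[str] = field(default_factory=list)
    mitre: list[str] = field(default_factory=list)   # optional section

    def render(self) -> str:
        """Return the report as plain text, one template section per line group."""
        escalation = "Required" if self.escalate else "Not required"
        lines = [
            f"Alert ID: {self.alert_id}",
            f"Classification: {self.classification}. {self.reasoning}",
            f"Escalation: {escalation}. {self.escalation_reason}",
            f"Who: {self.who}",
            f"What: {self.what}",
            f"Where: {self.where}",
            f"When: {self.when}",
            "IOCs:",
        ]
        for kind, values in self.iocs.items():
            lines.append(f"  {kind}: {', '.join(values)}")
        lines.append("Recommended remediation:")
        lines.extend(f"  - {step}" for step in self.remediation)
        if self.mitre:  # only emitted when a mapping was supplied
            lines.append("MITRE ATT&CK: " + ", ".join(self.mitre))
        return "\n".join(lines)


# Made-up example values (documentation IP range, invented user and host).
report = CaseReport(
    alert_id="1001",
    classification="True Positive",
    reasoning="User opened a phishing link and submitted credentials.",
    escalate=True,
    escalation_reason="Credentials were exposed to an external host.",
    who="j.doe",
    what="Credential phishing via email link",
    where="WKSTN-01",
    when="2025-01-01 09:15 UTC",
    iocs={"network": ["203.0.113.10"], "host": ["WKSTN-01"]},
    remediation=["Reset the user's password", "Block the sender domain"],
    mitre=["T1566.002"],
)
print(report.render())
```

Because the required fields have no defaults, leaving out the classification reasoning or the escalation justification fails immediately instead of producing a thin report.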

The Exam Experience — Lab 1

The labs run on a two-hour timer that starts the moment you launch. Alerts don’t all spawn immediately — they come in over roughly the first hour. I’d seen advice on Reddit suggesting you spawn the lab, walk away for 40-45 minutes, and come back once the full alert queue has loaded so you never close something early without the full picture. That’s the right call.

What I actually did in lab 1 was spend the first part investigating in Splunk while alerts were still spawning. By the time the queue was full I’d already mapped the entire attack chain. When the alerts arrived they basically confirmed what I’d already found. More than that, the Splunk work surfaced details that never appeared in any alert ticket at all: artefacts that strengthened my reports and added context the AI grader clearly rewarded.

The instinct to investigate beyond what the alert hands you is worth keeping, even in an exam that technically doesn’t require it. It’s the difference between a report that describes what happened and one that explains it.

The downside was timing — that left me with less than an hour to write and submit reports for every TP in the chain. Doable, but more rushed than it needed to be.

The Exam Experience — Lab 2

For lab 2 I changed approach. I spawned the lab, stepped away, and came back at the one-hour mark. While alerts were spawning during that wait I was doing the busywork: checking each IP against the threat intel platform as alerts appeared, correlating source IPs to internal users from the asset inventory, and noting each alert ID with its key fields. By the time the last alert spawned I had a full set of pre-researched notes for every alert in the queue.

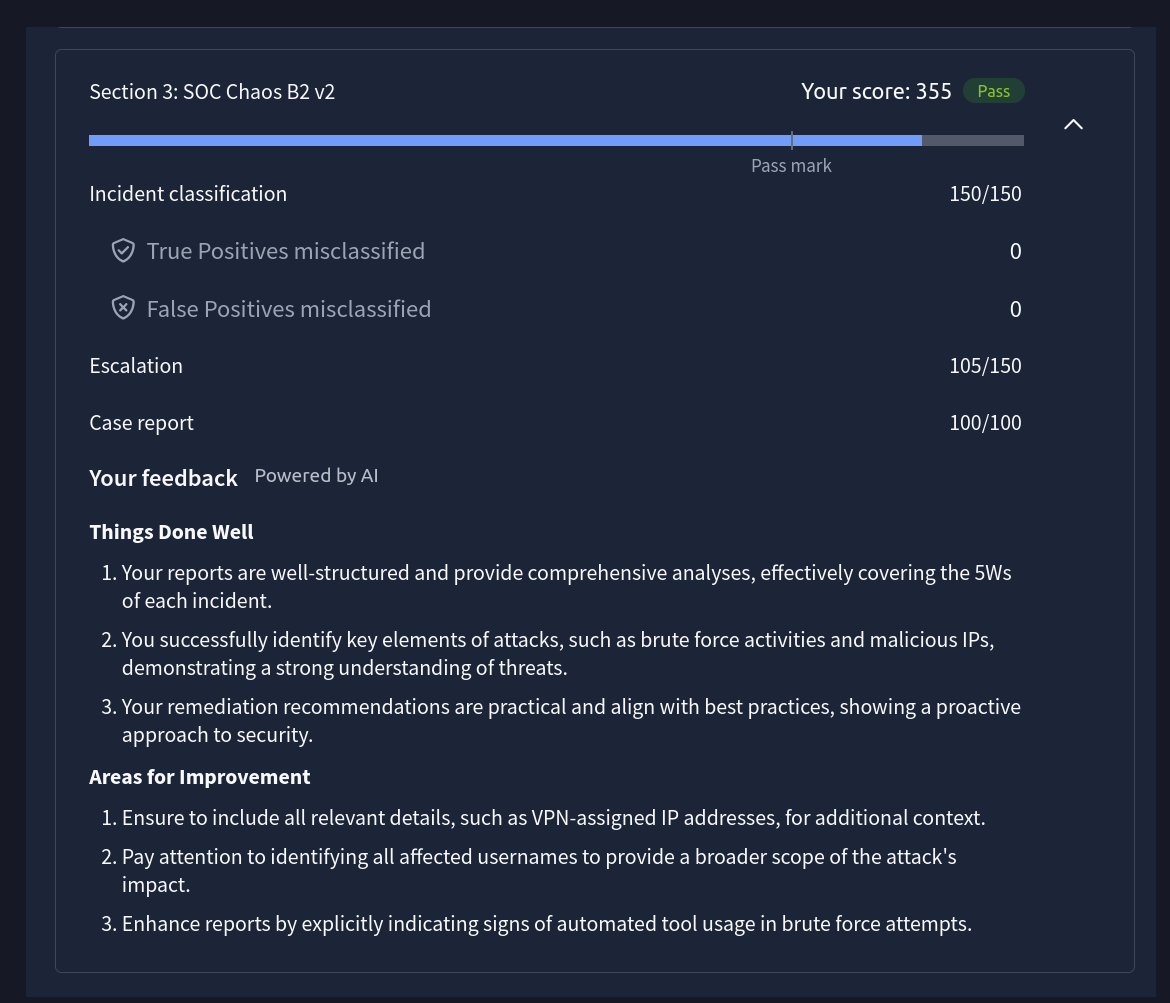

That upfront work is what made lab 2 feel clean. When it came time to write reports the classification thinking was already done — I just needed to confirm the chain, identify the TPs, and fill the template. The SOC handover notes mattered a lot in this lab — several alerts that looked suspicious in isolation were legitimate activity explicitly documented in shift context. Reading handover carefully isn’t optional.
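
The pre-research described above is manual in the exam, but its shape is easy to show in a few lines of Python. This is only a sketch: `ASSET_INVENTORY`, `THREAT_INTEL`, `AlertNote`, and `pre_research` are hypothetical names, and the two dictionaries stand in for lookups done by hand in the lab’s asset inventory and threat intel platform.

```python
from dataclasses import dataclass

# Hypothetical stand-ins for the exam's asset inventory and threat intel
# platform; in the lab these are lookups you do by hand.
ASSET_INVENTORY = {"10.10.5.21": "j.doe (WKSTN-01)"}
THREAT_INTEL = {"203.0.113.10": "malicious"}


@dataclass
class AlertNote:
    alert_id: str
    internal: dict[str, str]   # internal IP -> owner from the inventory
    external: dict[str, str]   # external IP -> threat intel verdict


def pre_research(alert_id: str, ips: list[str]) -> AlertNote:
    """Split an alert's IPs into known assets and intel-checked externals."""
    internal = {ip: ASSET_INVENTORY[ip] for ip in ips if ip in ASSET_INVENTORY}
    external = {
        ip: THREAT_INTEL.get(ip, "no intel hit")
        for ip in ips
        if ip not in ASSET_INVENTORY
    }
    return AlertNote(alert_id, internal, external)


# Made-up alert with one internal host and two external addresses.
note = pre_research("1002", ["10.10.5.21", "203.0.113.10", "198.51.100.7"])
print(note)
```

One note per alert ID, built as each alert spawns, is what leaves only chain confirmation and report writing for the second hour.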

On Escalation Calls and Exam Ambiguity

One thing worth flagging for future candidates — the escalation rules are written precisely and the exam expects you to apply them precisely. In lab 2 I dropped points on escalation for alerts I’d correctly classified as TPs. The detection rule explicitly stated no escalation without a confirmed successful login. The attacking IP didn’t match the established compromise chain. I followed the rules as written.

The grader apparently wanted them linked. I’d make the same call again with the same information. The lesson isn’t to second-guess yourself — it’s that when exam design is slightly ambiguous, you can follow the written rules correctly and still lose points. That’s an exam design issue, not an analyst error. Go in knowing that escalation decisions will be scrutinised and document your reasoning clearly even when you’re calling no escalation.
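
To make that reading concrete, here is a rough Python sketch of the rule as described above. The function name, parameters, and reasoning strings are a paraphrase for illustration, not the exam’s actual rule text. It assumes a confirmed successful login is the only escalation condition, and it deliberately does not model the chain linkage the grader apparently wanted.

```python
def escalation_call(is_true_positive: bool, login_confirmed: bool) -> tuple[bool, str]:
    """Return (escalate, documented reasoning) under the rule as paraphrased.

    Assumption: the only escalation condition is a confirmed successful
    login on a true positive. Chain linkage is deliberately not modelled.
    """
    if not is_true_positive:
        return False, "False positive: no escalation required."
    if login_confirmed:
        return True, "True positive with a confirmed successful login: escalate."
    return False, (
        "True positive, but no confirmed successful login: "
        "rule as written says do not escalate."
    )


escalate, reasoning = escalation_call(is_true_positive=True, login_confirmed=False)
print(escalate, reasoning)
```

Returning the reasoning alongside the decision mirrors the advice above: a no-escalation call should go into the report with its justification attached.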

The Meta Strategy

If you want to maximise your score and minimise stress:

- Spawn the lab.

- Use the wait time productively: check each alert as it appears, run IOCs through threat intel, cross-reference IPs against the asset inventory, note key fields per alert ID.

- When you come back you have the full queue and a set of pre-researched notes.

- Read the SOC handover notes carefully. They exist to give you context that changes how you classify alerts.

- Map the attack chain before touching a single report.

- Work TPs chronologically.

- Submit. The exam closes when the last TP is submitted, so you don’t need to touch FPs.
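
The "work TPs chronologically, skip FPs" step can be sketched in Python as a filter and a sort over your notes. The alert dictionaries, their keys, and the `report_queue` helper are all hypothetical; the exam’s queue does not export data in this form.

```python
from datetime import datetime

# Made-up alert notes: ID, timestamp, and the verdict reached during triage.
alerts = [
    {"id": "1003", "time": "2025-01-01T10:05:00", "verdict": "TP"},
    {"id": "1001", "time": "2025-01-01T09:15:00", "verdict": "TP"},
    {"id": "1002", "time": "2025-01-01T09:40:00", "verdict": "FP"},
]


def report_queue(alerts: list[dict]) -> list[str]:
    """Return true-positive alert IDs, oldest first; false positives are dropped."""
    true_positives = [a for a in alerts if a["verdict"] == "TP"]
    true_positives.sort(key=lambda a: datetime.fromisoformat(a["time"]))
    return [a["id"] for a in true_positives]


print(report_queue(alerts))
```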

Honest Feedback on the Exam Design

This is the part I feel most strongly about, so I’ll say it plainly — and with the caveat that having just come through BTL1 and CDSA, I’m probably holding SAL1 to a standard it was never designed to meet.

The investigation depth is lighter than I expected. Most of what you need to write a complete, accurate report is contained in the alert ticket itself. That’s not necessarily a flaw — at L1, triage speed and classification accuracy matter more than deep forensic pivoting — but it does mean the exam feels closer to structured decision-making than active investigation.

A small tweak would go a long way. Make the analyst find one key detail rather than handing everything over in the ticket. One log pivot, one correlation, one artefact worth poking at. The VM environment has interesting content in it — it’s just not being used for assessment. Requiring a single investigative step per lab wouldn’t change what the cert is testing, it’d just make the test more realistic.

That’s a product suggestion, not a failing. The core exam — classification, escalation, reporting — is well designed and the AI grading is genuinely good.

Is It Worth It?

Yes — and more than I expected going in.

SAL1 tests the fundamentals of analyst thinking: classify correctly, escalate appropriately, document clearly. Those skills matter at L1 and the exam format actually tests them rather than just asking you to recall definitions. The scenario-based format, the handover notes, the threat intel lookups, the escalation edge cases — it’s a realistic enough slice of what early SOC work looks like to be useful preparation.

Real L1 SOC work does involve a lot of alert triage. That’s just the role. But it also involves pattern recognition across a shift, building context over time, knowing when something that looks routine is actually the start of something bigger. The investigation instinct you build through BTLO and CyberDefenders feeds directly into that — SAL1 is the structured framework that sits on top of it.

If you’re building toward an L1 role in the Australian market, SAL1 is a solid, practical cert that hiring managers recognise. Combine it with hands-on lab work and you’ll walk into the exam with more than you need, and walk into interviews with more than the cert alone suggests.

The cert alone won’t get you hired. The thinking it asks you to demonstrate might.